Private and Secure Distributed Matrix Multiplication

Machine learning on big data sets takes a significant amount of computational power, so it is often necessary to offload some of the work to external distributed systems, such as an Amazon EC2 cluster. It is useful to be able to utilize external resources for computation tasks while keeping the actual data private and secure. In particular, matrix multiplication is an essential step in many machine learning processes, but the owner of the matrices may have reasons to keep the actual values protected.

In this post, we’ll discuss four works about secure distributed computation. First, we’ll talk about a method of using MDS (maximum distance separable) error correcting codes to add security and privacy to general data storage (“Cross Subspace Alignment and the Asymptotic Capacity of X-Secure T-Private Information Retrieval” by Jia, Sun, Jafar).

Then we’ll discuss method of adapting a coding strategy for straggler mitigation (“Polynomial codes: an optimal design for high-dimensional coded matrix multiplication” by Yu, Qian, Maddah-Ali, Avestimehr) in matrix multiplication to instead add security or privacy (“On the capacity of secure distributed matrix multiplication” by Chang, Tandon and “Private Coded Matrix Multiplication” by Kim, Yang, Lee)

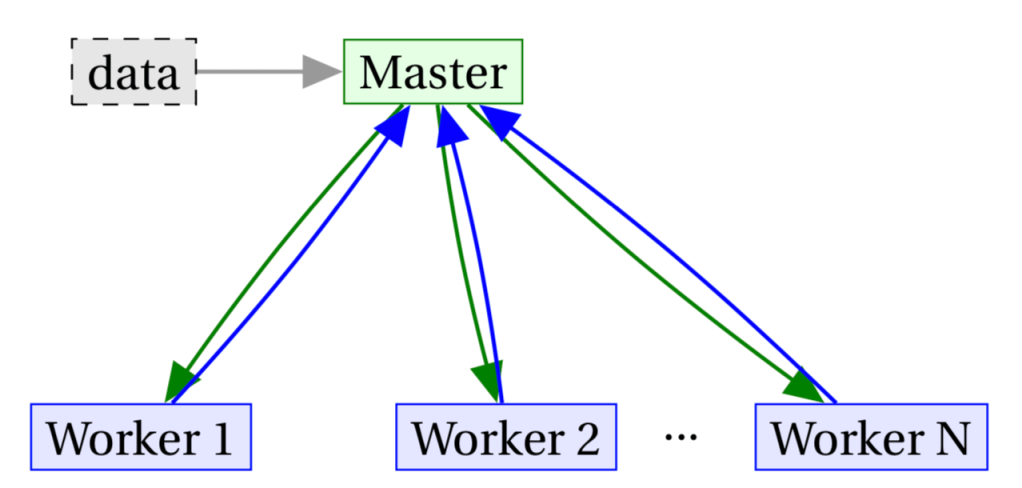

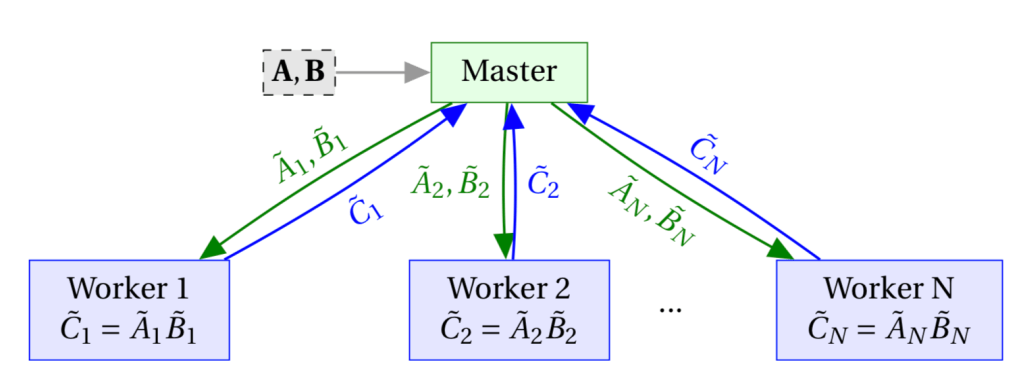

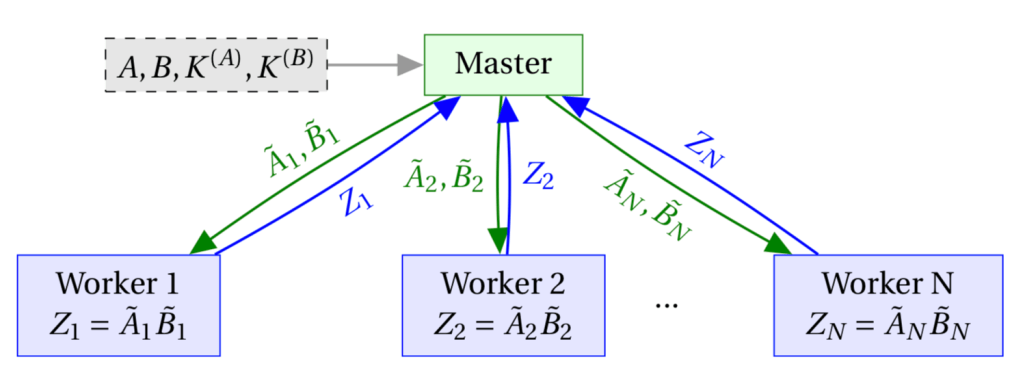

Throughout this post we will use variations on the following communication model:

The data in the grey box is only given to the master, so workers only have access to what they receive (via green arrows). Later on we will also suppose the workers have a shared library not available to the master. The workers do not communicate with each other as part of the computation, but we want to prevent them from figuring out anything about the data if they do talk to each other.

This model is related to private computation but not exactly the same. We assume the servers are “honest but curious”, meaning they won’t introduce malicious computations. We also only require the master to receive the final result, and don’t need to protect any data from the master. This is close to the BGW scheme ([Ben-Or, Goldwasser, Wigderson ’88]), but we do not allow workers to communicate with each other as part of the computation of the result.

We consider unconditional or information-theoretic security, meaning the data is protected even if the workers have unbounded computational power. Furthermore, we will consider having perfect secrecy, in which the mutual information between the information revealed to the workers and the actual messages is zero.

X-Secure T-Private Information Retrieval

Before we get into matrix-matrix multiplication, consider the problem of storing information on the workers to be retrieved by the master, such that it is “protected.” What do we mean by that? [Jia, Sun, and Jafar ’19] define X-secure T-private information retrieval as follows:

Let

be a data set of messages, such that each

consists of

random bits. A storage scheme of

on

nodes is

1. X-secure if any set of up to

servers cannot determine anything about any

and

2. T-private if given a query from the user to retrieve some data element

[Jia, Sun, and Jafar ’19], any set of up to

users cannot determine the value of

.

Letting be the set of queries sent to each node and

be the information stored on each node (all vectors of length L), we depict this as:

The information theoretic requirements of this system to be correct can be summarized as follows (using notation for set

):

| Property | Information Theoretic Requirement |

| Data messages are size | |

| Data messages are independent | |

| Data can be determined from the stored information | |

| User has no prior knowledge of server data | |

| X-Security | |

| T-Privacy | |

| Nodes answer only based on their data and received query | |

| User can decode desired message from answers |

Given these constraints, Jia et al. give bounds on the capacity of the system. Capacity is the maximum rate achievable, where rate is defined as bits requested by the worker (, the length of a single message) divided by the number of bits downloaded by the worker. The bounds are in terms of the capacity of T-Private Information Retrieval, (which is the same as the above definition, with only requirement 2).

If

then for arbitrary

,

.

When

:

[Jia, Sun, and Jafar ’19]

Jia et al. give schemes that achieve these bounds while preserving the privacy and security constraints by introducing random noise vectors into how data is stored and queries are constructed. The general scheme for uses cross subspace alignment, which essentially chooses how to construct the stored information and the queries such that the added noise mostly “cancels out” when the master combines all the response from the servers. The scheme for

is straightforward to explain, and demonstrates the idea of using error correcting codes that treat the random values as the message and the actual data as the “noise.”

For this scheme, the message length is set to

(the number of nodes

, minus the maximum number of colluding servers

). First, we generate

random bit vectors of length

:

Next, apply an MDS code to

to get

, which are

encoded vectors of length

:

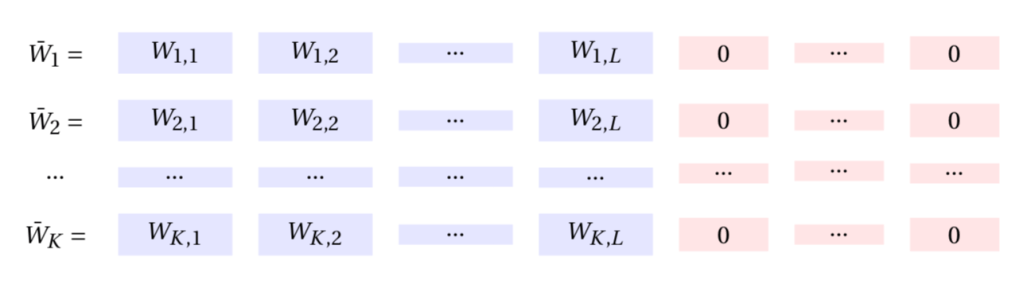

For our data , we pad each vector with zeros to get

of length

:

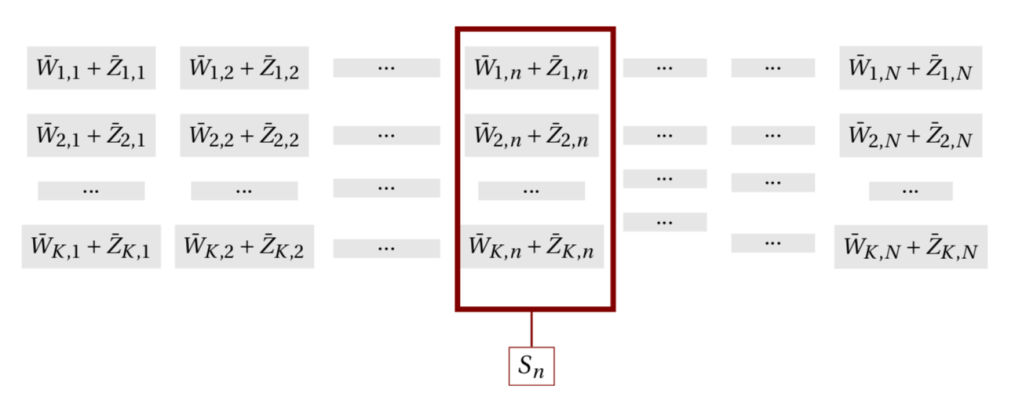

Now that the dimensions line up, we can add the two together and store each column at the

node:

To access the data, the user downloads all bits. The length

string downloaded from row

can be used to decode

:

are all zero, so columns

through

have the values of

. This gives the user

values from the MDS code used on each row, so they can decode and get

and

. Then a subtraction from the downloaded data gives

. Because of the MDS property of the code used to get

, this scheme is X-secure and because the user downloads all bits, it is T-private.

Matrix Multiplication with Polynomial Codes

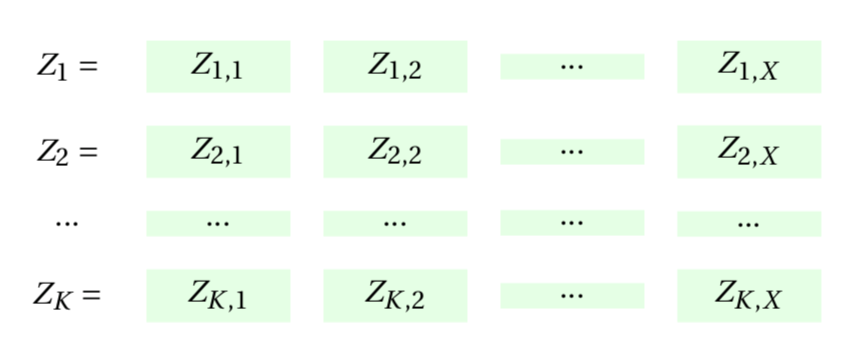

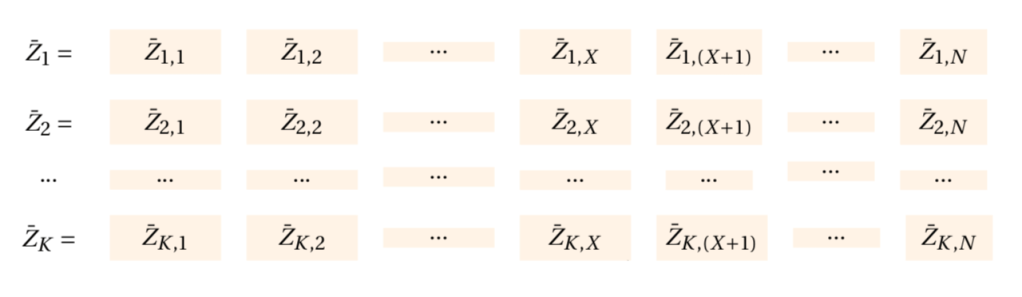

We now move on to the task of matrix-matrix multiplication. The methods for secure and private distributed matrix multiplication we will discuss shortly are based on polynomial codes, used by [Yu, Maddah-Ali, Avestimehr ’17] for doing distributed matrix multiplications robust to stragglers. Suppose the master has matrices and

for some finite field

, and

. Assume

and

are divisible by

, so we can represent the matrices divided into

submatrices:

and

So to recover , the master needs each entry of:

The key idea of polynomial codes is to encode and

in polynomials

and

to be sent to the

worker, where they are multiplied and the result is returned. The goal of Yu et al. was to create robustness to stragglers, and so they add redundancy in this process so that not all workers need to return a result for the master to be able to determine

. In particular, only

returned values are needed, so

servers can be slow or fail completely without hurting the computation. This method can be thought of as setting up the encodings of

and

so that the resulting multiplications

are evaluations of a polynomial with coefficients

at

different points — equivalent to a Reed-Solomon code.

This idea is adapted by [Chang, Tandon ’18] to protect the data from colluding servers: noise is incorporated into the encodings such that the number of encoded matrices required to determine anything about the data is greater than the security threshold . Since the master receives all

responses it is able to decode the result of

, but no set of

nodes can decode

,

, or

. Similarly, [Kim, Yang, Li ’19] adapts this idea to impose privacy on a matrix-matrix multiplication: workers are assumed to have a shared library

, and the user would like to multiply

for some

without revealing the value of

to the workers. The workers encode the entire library such that when the encoding is multiplied by an encoded input

from the master, the result is useful to the master in decoding

.

Chang and Tandon consider the following two privacy models, where up to servers may collude. The master also has

(and in the second model,

), which are matrices of random values with the same dimensions as

(and

). These are used in creating the encodings

(and

).

is public,

is private:

Both private:

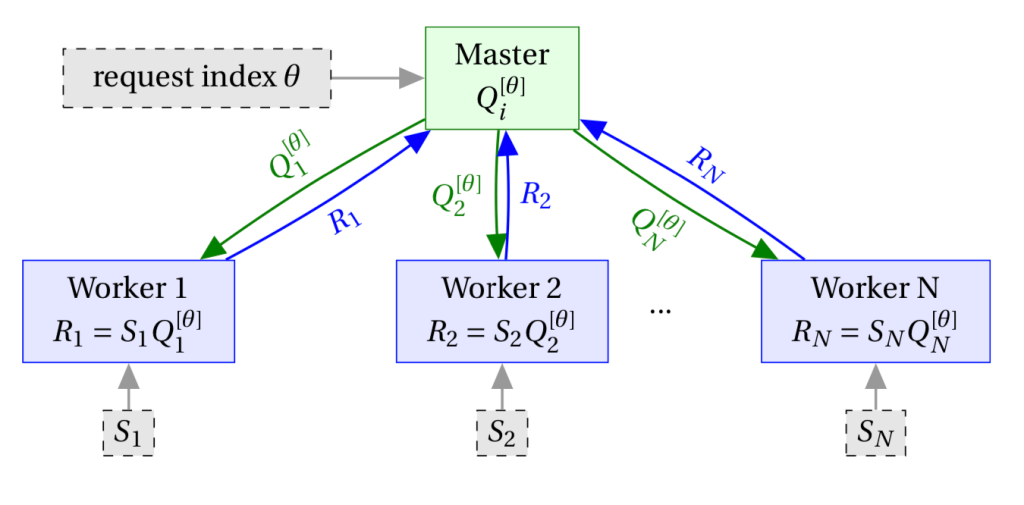

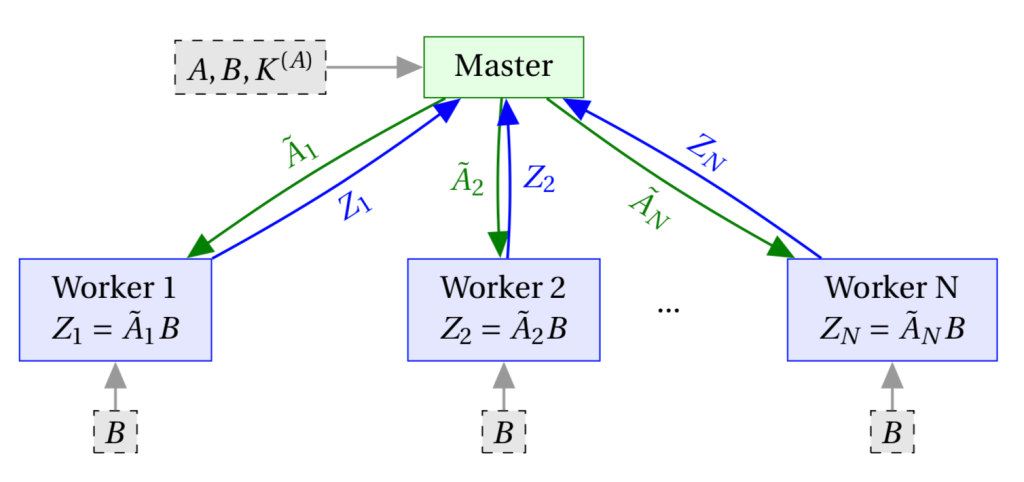

Kim, Yang, and Lee take a similar approach of applying the method of polynomial code to private matrix multiplication. As before, there are workers, but now the master wants to multiply

with some

in shared library

(all the workers have the shared library).

Since the master isn’t itself encoding it has to tell the workers how to encode the library so that it can reconstruct the desired product. This is done by having the master tell the workers what values of

they should use to evaluate the polynomial that corresponds to encoding each library matrix. We denote the encoding of the library done by each worker

as the multivariate polynomial

which is evaluated at

and the node-specific vector

to get the node’s encoding,

. The worker multiplies this with the encoding of

it receives,

and returns the resulting value

. All together, we get the following communication model:

Conclusion

As we’ve seen, coding techniques originally designed to add redundancy and protect against data loss can also be used to intentionally incorporate noise for data protection. In particular, this can be done when out-sourcing matrix multiplications, making it a useful technique in many data processing and machine learning applications.

References:

- Jia, Zhuqing, Hua Sun, and Syed Ali Jafar. “Cross Subspace Alignment and the Asymptotic Capacity of X-Secure T-Private Information Retrieval.” IEEE Transactions on Information Theory 65.9 (2019): 5783-5798.

- Yu, Qian, Mohammad Maddah-Ali, and Salman Avestimehr. “Polynomial codes: an optimal design for high-dimensional coded matrix multiplication.” Advances in Neural Information Processing Systems. 2017.

- Chang, Wei-Ting, and Ravi Tandon. “On the capacity of secure distributed matrix multiplication.” 2018 IEEE Global Communications Conference (GLOBECOM). IEEE, 2018.

- Kim, Minchul, Heecheol Yang, and Jungwoo Lee. “Private Coded Matrix Multiplication.” IEEE Transactions on Information Forensics and Security (2019).

Leave a comment