A few lessons from the history of multiparty computation

As part of my Ph.D. qualifying exam, I gave a survey talk on some of the recent progress in practical multi-party computation (MPC). After the talk, Omer Reingold asked me why so much progress on MPC happened in the last decade or so, given that the main theoretical groundwork for multiparty computation was laid out in the 1980s. Omer’s question got me interested, so I looked at some MPC papers from over the years and talked to a few people who have been working on MPC for some time now. In this post, I want to share some of what I’ve learned about the history of the progress of MPC, a few answers to Omer’s question, and potentially a few takeaways on progress in science more broadly.

To give a bit of background, MPC is one of the most fundamental problems in cryptography. In particular, MPC protocols allow a set of parties, each of which holds its private input, to compute a joint function over their inputs, in a way that protects the confidentiality and integrity of the computation from an adversary that controls a subset of the parties and the communication channels. Defining this precisely requires a lot of care, yet for now, you can think of an MPC protocol as being secure, if the adversary can only cause as much damage as it can in an “ideal world” in which the computation is performed with the aid of a trusted incorruptible party. Intuitively, in such an ideal world, the adversary cannot do much more than choose its inputs, modify its output, or choose not to participate in the computation at all.

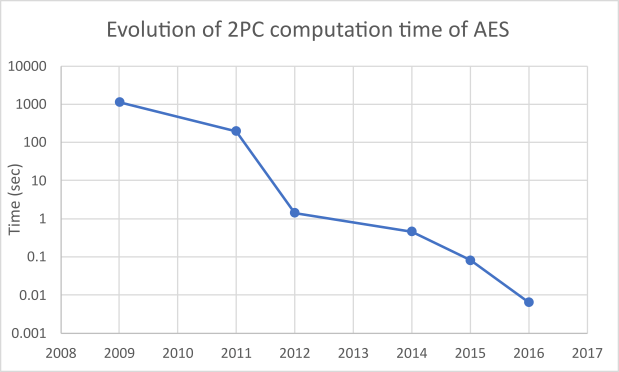

In the 1980s, a sequence of works initiated the study of multiparty computation and established very powerful and general results, showing the feasibility of constructing protocols for securely computing any multi-party functionality in a variety of settings. Those results were mostly theoretical. In contrast, the last 15 years saw a growing interest in MPC from a more practical perspective. To put this rising interest in context, consider the following two graphs (data for the graphs is here).

This graph shows the time of a two-party secure computation of the AES block cipher over the years. That is the time it takes to evaluate AES(k,x), where one party holds a 128-bit key k to the block cipher, the other party holds a 256-bit data block x, and the output is 256-bit long. The measurements are from the original papers. There are different variants of this “benchmark”, in terms of the number of parties (2PC/MPC), the number of corrupted parties (honest/dishonest majority), precise security model (semi-honest/fully malicious), hardware, network (LAN/WAN), and cost model (single/batch execution), but, for concreteness, the graph only includes results in the batch-evaluation setting in the fully malicious two-party setting.

The second graph shows the number of papers on two-party computation, over the years. This is not a very well-defined characterization, but for simplicity, I looked at all the papers referenced from the relevant chapters in the book on MPC by Evans, Kolesnikov, and Rosulek. Admittedly, these are not all the papers in the area, there might be valuable indirect contributions that are missing, and, more importantly, the number of papers is a bit of a superficial metric. Yet, even with these caveats, I think this is helpful to get a general picture. On top of the flurry of academic research, MPC has also been thriving in the industry, with companies like Curv, Partisia, Sharemind, Unbound, and others building MPC-based products and services.

The first graph shows quite impressive progress. In less than a decade, the time to securely compute AES in the two-party setting decreased by more than 60,000x, from more than 10 minutes to less than 10 milliseconds. (For reference, in the same period, CPU speeds increased roughly twofold, and typical local-area network speeds increased from 1GB/S to 10GB/S.) The second graph illustrates the increase in the volume of research, which has enabled this progress. This includes advances such as various increasingly efficient garbling techniques, more efficient cut–and–choose techniques for malicious security, protocols based on information-theoretic message-authentication codes, homomorphic encryption, and many others. (See the EKR book for an overview of these techniques and more references.) The second graph also clearly shows the inflection point that happened about 15 years ago. This inflection point illustrates the premise of the question that has triggered this blog post.

I use the term inflection point, since progress in MPC never really stopped between the late 80s and the mid-2000s, and there was a lot of research in the interim period. For example, oblivious transfer–one of the fundamental primitives used to build MPC–received a lot of attention and saw significant progress. There have been many works developing the theoretical framework of secure computation, studying secure composition, and reducing the round complexity of secure multiparty computation protocols. Other works developed special-purpose secure computation protocols for concrete applications such as secure auctions and private information retrieval. Many of the techniques and ideas in these works were instrumental for the more recent progress.

Having said that, there still seems to have been a dip in the attention devoted to MPC for a certain period. One explanation that I’ve found interesting is that the powerful feasibility results from the early days made the problem seem “solved” from a theoretical point of view. As a result, interest in MPC within the theory community decreased. It took time before the interest ramped up again, this time more within the more-practically oriented crypto community. (Interestingly, since then, MPC found renewed interest in the theoretical cryptography community as well.)

One work that, to me, is a key point in the transition of MPC from theory to practice is the 2004 Fairplay paper. This was the first full-fledged system that implemented generic secure function evaluation. I believe that, together with other early implementations of MPC-based systems (such as the system developed for the Danish sugar-beet auction and the Sharemind system), it played a pivotal role in accelerating practical research. I view it as a lesson on the value of “first systems”: they help to identify bottlenecks and discover new concerns, which are hard to predict without building an actual system. Moreover, such systems put “theory problems” on the radar of practitioners and demonstrate which ideas are closer to being practical. In some sense, a system is an evidence that “the constants in the big-O have been worked out…and they are not astronomical.” Furthermore, when code is made public, follow-up works can build upon an initial system and improve on it more rapidly. A first system can mark the transition of an idea from pure theory to practice. It’s still fair to ask what determines the timing of the appearance of such a first system. One answer is that it often takes the right type of collaboration of researchers from different research communities, as seems to have been the case with “Fairplay”.

I think that external factors also played a role in the timing of events. Specifically, the growth of the Internet and the demand for security on the Internet stimulated a lot of interest and progress in applied cryptography. The late 1990s saw large-scale adoption of public-key cryptography with the successful development and deployment of SSL. More complex Internet applications began to appear later, including those that are inherently security-sensitive, like e-commerce. These applications stimulated interest in more advanced cryptographic tools, MPC being one of them. Indeed, MPC papers from that period discuss new Internet-driven applications as part of their motivation.

A final factor that I want to mention ties back to the first graph above. The fact that many works demonstrate their performance by measuring AES evaluation is, to me, an example of the value of standardized benchmarks. Such benchmarks help to evaluate new techniques and ideas within a certain area and can help progress on a particular dimension, partly because of the competitive element they add. As with any measurement, there is a risk of overfitting to the benchmark, rather than focusing on the true goal, but I think they are very helpful overall.

I am sure there are other valuable lessons to be learned from this case study. Moreover, I am quite certain that folks with more experience in the area have a much better perspective on the history of these developments than I do. I will be very happy to hear other perspectives!

Acknowledgements: I would like to thank my quals committee, Dan Boneh, Omer Reingold, and Mary Wootters, for an interesting discussion that has led to this blog post. I am grateful to Yuval Ishai and Benny Pinkas for interesting conversations on the progress of MPC and to Henry Corrigan-Gibbs and Saba Eskandarian for providing me with helpful comments.

Leave a comment